# NO₂ monitoring

TROPOMI (TROPOspheric Monitoring Instrument) is a sensor on board the Sentinel-5 Precursor (S5P) satellite, developed to monitor atmospheric chemistry.

This use case analyses Sentinel-5P imagery, focusing on NO₂ measurements. Compared to other variables measured by TROPOMI, NO₂ is of high interest not only because of its direct relation to environmental health, but also because its main sources are typically known, because it is not transported over long distances, and because the total column values measured by TROPOMI are a strong indication of ground-level values.

This document describes how to analyze NO₂ data on openEO Platform using the Python, JavaScript and R clients. Additionally, we've prepared a basic Jupyter Notebook for Python and much more advanced R and Python Shiny apps that you can run to analyze and visualize the NO₂ data in various ways.

# Shiny apps (R and Python)

In the Shiny app, the NO₂ values can be analyzed globally over a full year. The user can set threshold values for the cloud cover. Gaps caused by the cloud removal are filled by linear interpolation. Noise is reduced by computing 30-day smoothed values, using kernel smoothing of the time series.

The Shiny app allows for three different modes:

- Time series analysis / comparison against locally measured data

- Map visualization for individual days

- Animated maps that highlight differences in space and time

Currently, the Shiny apps can only run on your local computer but we are looking into offering a hosted version, too. There's also a guide that explains how to run openEO code in a Python Shiny app in general.

You can find the full source code and documentation of the apps in their respective repositories:

# Time-Series Analyser

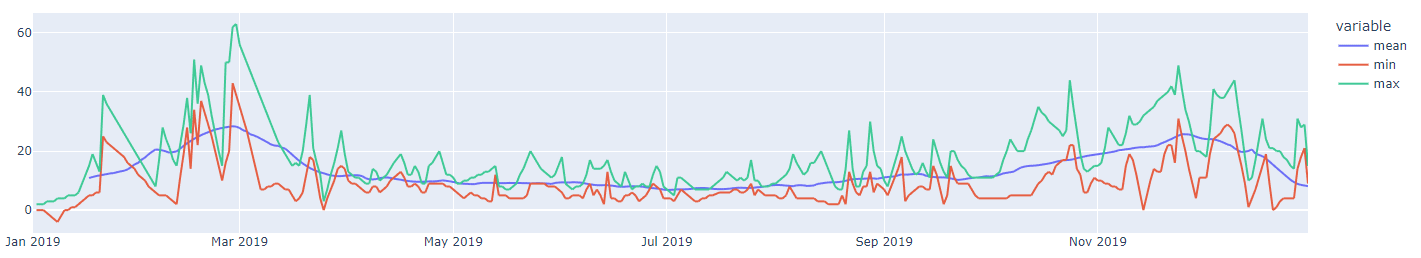

The Time-Series Analyser shows the "reduced" time series (min/max/smoothed mean) of Sentinel-5P NO₂ data for a given region. The user passes the coordinates of the bounding box of the area of interest, which is shown in a dynamic map, along with the time frame and the cloud cover threshold to be considered in the computation. The analyser can also compare the result against locally measured data (for selected areas only).

# Map Maker for one Snapshot

If the user wants to look at NO₂ data at one given point in time, this function was developed for that purpose. This second option in the Shiny app visualizes what a country's NO₂ pattern looks like at a given time, based on S5P NO₂ data.

# Spacetime Animation

Here, the user can create and visualise their own spatio-temporal animation of S5P NO₂ data. Given a start and end date, a quality flag for cloud cover and a country name, the app produces a personalised spacetime GIF.

# Basic NO₂ analysis in Python, R and JavaScript

openEO Platform has multiple collections that offer Sentinel-5P data, e.g. for NO₂:

- TERRASCOPE_S5P_L3_NO2_TD_V1 - daily, hosted by VITO, pre-processed to remove clouds etc.

- TERRASCOPE_S5P_L3_NO2_TM_V1 - monthly, hosted by VITO, pre-processed to remove clouds etc.

- TERRASCOPE_S5P_L3_NO2_TY_V1 - yearly, hosted by VITO, pre-processed to remove clouds etc.

- SENTINEL_5P_L2 - Level 2 data, hosted by Sentinel Hub

SENTINEL_5P_L2 also contains additional data such as CO, O₃ and SO₂, which you can experiment with as well. CO is also available from VITO in collections such as TERRASCOPE_S5P_L3_CO_TD_V1.

In this example we'll use the daily composites from the TERRASCOPE_S5P_L3_NO2_TD_V1 collection, which is available from the end of April 2018.

# 1. Load a data cube

Attention

This tutorial assumes you have completed the Getting Started guides and are connected and logged in to openEO Platform.

Your connection object should be stored in a variable named connection.

First of all, we need to load the data into a data cube. We set the temporal extent to the year 2019 and choose a spatial extent, here an area over Münster, Germany.
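
A minimal sketch of this step with the Python client, assuming `connection` is the logged-in connection object from the Getting Started guide. The function name `load_no2_cube` and the bounding box coordinates are illustrative assumptions and not taken from the original tutorial; adjust them to your area of interest.

```python
# Approximate bounding box around Münster, Germany (illustrative values, WGS84).
MUENSTER_BBOX = {"west": 7.5, "south": 51.85, "east": 7.8, "north": 52.05}


def load_no2_cube(connection, bbox=MUENSTER_BBOX):
    """Build a data cube of daily NO2 composites for 2019 over the given bounding box."""
    return connection.load_collection(
        "TERRASCOPE_S5P_L3_NO2_TD_V1",
        spatial_extent=bbox,
        # openEO temporal extents are half-open: the end date itself is excluded.
        temporal_extent=["2019-01-01", "2020-01-01"],
        bands=["NO2"],
    )
```

With a real connection you would call `datacube = load_no2_cube(connection)`. Nothing is computed at this point; the client only builds up the process graph.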

# 2. Fill gaps

The data cube may contain no-data values due to the removal of clouds in the pre-processing of the collection. We'll apply a linear interpolation along the temporal dimension:
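
One way this could look in the Python client is sketched below, assuming `datacube` is a data cube with a temporal dimension named `t`. The helper name `fill_gaps` is hypothetical; `array_interpolate_linear` is the pre-defined openEO process for linear interpolation.

```python
def fill_gaps(datacube):
    """Linearly interpolate no-data values along the temporal dimension "t"."""
    return datacube.apply_dimension(
        process="array_interpolate_linear",
        dimension="t",
    )
```

Usage would be `datacube = fill_gaps(datacube)`. Linear interpolation can only fill gaps that lie between two valid observations, so no-data values at the very start or end of the time series may remain.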

# 3. Smoothen values (optional)

If you want to smooth the values, for example to get rid of noise, you can run a moving average over a certain number of days along the temporal dimension. If you want to work on the raw values, you can omit this step.

The moving_average_window variable (N in the UDF code) specifies the smoothing window in days. You can choose it freely, but it needs to be an odd integer >= 3. In this example, 31 was chosen to smooth the timeseries with a moving average over a full month.

Note

A technical detail here is that we run the moving average as a Python UDF, which is custom Python code. We don't have a pre-defined process in openEO yet that easily allows this and as such we fall back to a UDF. We embed the Python code in a string in this example so that you can easily copy the code, but ideally you'd store it in a file and load the UDF from there.

The Python UDF code (for all client languages!) itself is pretty simple:

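    from pandas import Series
    import numpy as np

    N = 31  # window size in days; must be an odd integer >= 3

    def apply_timeseries(series: Series, context: dict) -> Series:
        # Centered moving average; wrap the result so it matches the annotated return type.
        smoothed = np.convolve(series, np.ones(N) / N, mode="same")
        return Series(smoothed, index=series.index)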
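
To get a feeling for what this convolution does, you can try it locally on a small array, outside of openEO. The sketch below is an illustration only: the helper name `moving_average` and the test data are made up, and a window of 3 is used instead of 31 to keep the output short.

```python
import numpy as np


def moving_average(values, window):
    """Centered moving average, using the same convolution as the UDF."""
    if window < 3 or window % 2 == 0:
        raise ValueError("window must be an odd integer >= 3")
    return np.convolve(values, np.ones(window) / window, mode="same")


constant = np.ones(7)
smoothed = moving_average(constant, 3)
# Interior values stay at 1.0, but the first and last value drop to 2/3,
# because mode="same" treats everything beyond the ends of the series as zero.
print(smoothed)
```

The same edge effect applies to the UDF: with a window of 31 days, roughly the first and last 15 days of the year are averaged together with implicit zeros and therefore come out too low. If those days matter for your analysis, consider loading a slightly longer temporal extent and cutting the margins afterwards.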

# 4. What do you want to know?

Now it's time to decide what we actually want to compute and get an insight into.

Currently, the data cube still has four dimensions:

- the spatial dimensions x and y, covering the extent of Münster,

- the temporal dimension t, usually covering about 365 values for the given year, and

- the band dimension bands, with just a single label NO2.

As the bands dimension only has a single label, it gets dropped automatically during export, which means we now basically have a series of NO₂ maps, one for each day.

We have several options now:

- Store the daily maps as a netCDF file

- Reduce the temporal dimension to get a single map for the year as GeoTiff

- Reduce the spatial dimensions to get a timeseries as JSON

# 4.1 netCDF Export

To store daily maps in a netCDF file, we simply need to store the datacube:
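
In the Python client this could be as short as the following sketch, assuming `datacube` is the (gap-filled and optionally smoothed) data cube from the previous steps. The helper name `as_netcdf` is hypothetical.

```python
def as_netcdf(datacube):
    """Mark the data cube to be stored as netCDF, keeping all daily maps."""
    return datacube.save_result(format="netCDF")
```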

# 4.2 Map for a year as GeoTiff

To get a map with values for a year, we need to reduce the temporal dimension. You can run different reducers such as mean (used in this example), max or min so that we get a single value for each pixel.

Lastly, you need to specify that you want to store the result as GeoTiff (GTiff due to the naming in GDAL).
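
A possible sketch with the Python client, assuming `datacube` has a temporal dimension named `t`. The helper name `yearly_map` is hypothetical; the reducer is passed by its openEO process name.

```python
def yearly_map(datacube, reducer="mean"):
    """Collapse the temporal dimension to one value per pixel and store it as GeoTiff."""
    # Alternatively pass "min" or "max" as the reducer.
    reduced = datacube.reduce_dimension(dimension="t", reducer=reducer)
    return reduced.save_result(format="GTiff")
```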

# 4.3 Timeseries as JSON

To get a timeseries, we need to reduce the spatial dimensions by aggregating them. You can run different aggregation methods such as mean (used in this example), max or min so that we get a single value for each timestep.

Lastly, you need to specify that you want to store the result as JSON.
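
The following sketch shows one way to do this with the Python client, under the assumption that the area of interest is given as a west/south/east/north dictionary. Both helper names (`bbox_to_polygon`, `timeseries`) are hypothetical. The bounding box is converted to a GeoJSON polygon because spatial aggregation works on geometries.

```python
def bbox_to_polygon(bbox):
    """GeoJSON polygon (closed ring, lon/lat order) covering a west/south/east/north box."""
    w, s, e, n = bbox["west"], bbox["south"], bbox["east"], bbox["north"]
    return {
        "type": "Polygon",
        "coordinates": [[[w, s], [e, s], [e, n], [w, n], [w, s]]],
    }


def timeseries(datacube, bbox, reducer="mean"):
    """Aggregate all pixels inside the box to one value per timestep, stored as JSON."""
    # Alternatively pass "min" or "max" as the reducer.
    aggregated = datacube.aggregate_spatial(
        geometries=bbox_to_polygon(bbox),
        reducer=reducer,
    )
    return aggregated.save_result(format="JSON")
```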

# 5. Execute the process

Regardless of which option you chose in step 4, you can now send the process to the back-end and compute the result. For simplicity, we execute it synchronously and store the result in memory. For the GeoTiff and netCDF outputs you may want to store the result in a file instead, while the JSON output can be worked with directly.
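
As a rough sketch for the Python client, assuming `result` is the object returned by the `save_result` step: `execute()` runs the process synchronously and returns the parsed JSON, while `download()` writes the response to a file. The helper name `run_synchronously` and the file names in the usage note are made up for illustration.

```python
def run_synchronously(result, outputfile=None):
    """Run the process synchronously; write to a file if a path is given."""
    if outputfile is not None:
        # Suitable for binary outputs such as netCDF or GeoTiff.
        result.download(outputfile)
        return outputfile
    # Suitable for JSON output: returns the parsed response in memory.
    return result.execute()
```

For example, `run_synchronously(result, "no2.nc")` would store a netCDF result on disk, and `run_synchronously(result)` would return the JSON timeseries. Synchronous execution is meant for small requests; for larger areas or longer periods a batch job is usually the better choice.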

# Result

Visualizing the results of the timeseries analysis for mean, min and max could result in a chart like this: